SoD-Toolkit

Project Description

As new sensors and forms of computing devices emerge, ubiquitous environments have become increasingly commonplace, allowing researchers to design and implement novel interaction possibilities. When used together with other devices and sensors, the capabilities of these devices can be expanded significantly. However, researchers still face implementation, instrumentation, and cost barriers before they can take advantage of these additional capabilities. In this project, we introduce a toolkit that facilitates the exploration and development of multi-device interactions, applications, and ubiquitous environments by using combinations of low-cost sensors to provide spatial awareness.

The toolkit offers three main features. (1) A “plug and play” architecture for seamless multi-sensor interaction that allows novel exploration and ad-hoc setups of ubiquitous environments. When we began developing the toolkit with our industry partners, we relied exclusively on Microsoft Kinect sensors. However, when developers deployed systems built with the toolkit, they received feedback from users about general discomfort with multiple highly visible tracking sensors in an environment. In response, we changed the toolkit from single-sensor rigidity to multi-sensor flexibility. (2) Client libraries that integrate natively with several major devices and UI platforms. SoD-Toolkit builds upon the general concept of toolkits and extends that work with greater support for a diverse set of devices and sensor platforms, multi-sensor mapping, and the ability to explore novel multi-device interaction combinations. (3) Unique tools that allow prototyping interactions without the need for people, sensors, rooms, or devices. Researchers and developers can explore multi-device, multi-sensor ubiquitous environments without having all of the hardware or sensor components, which reduces the physical reliance on sensor and device hardware.

Project Images

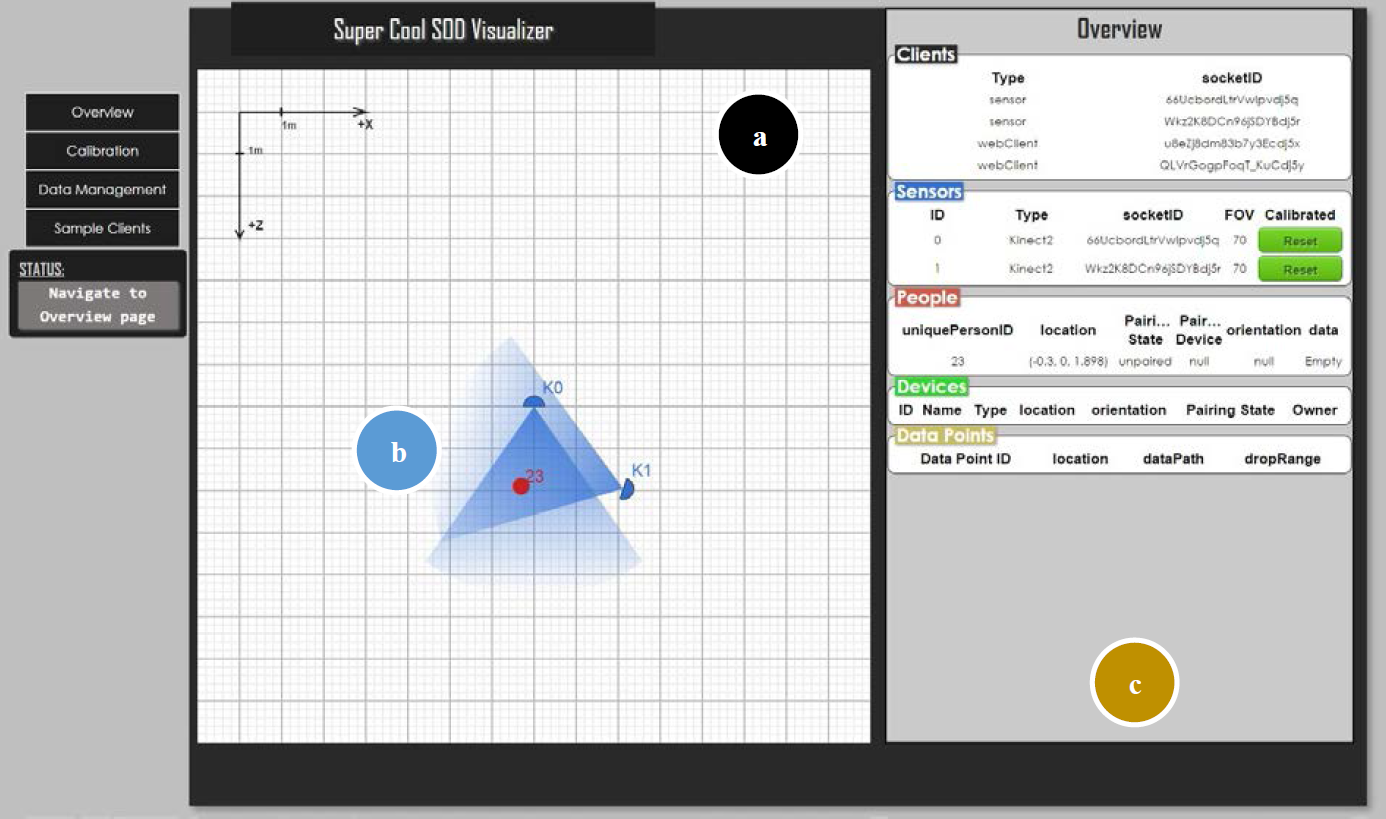

The visualization and prototyping tool for the SoD-Toolkit

(a) The tracked environment, (b) Representation of entities tracked in the environment, (c) List of available entities and their current state, as well as clients currently connected in the environment.

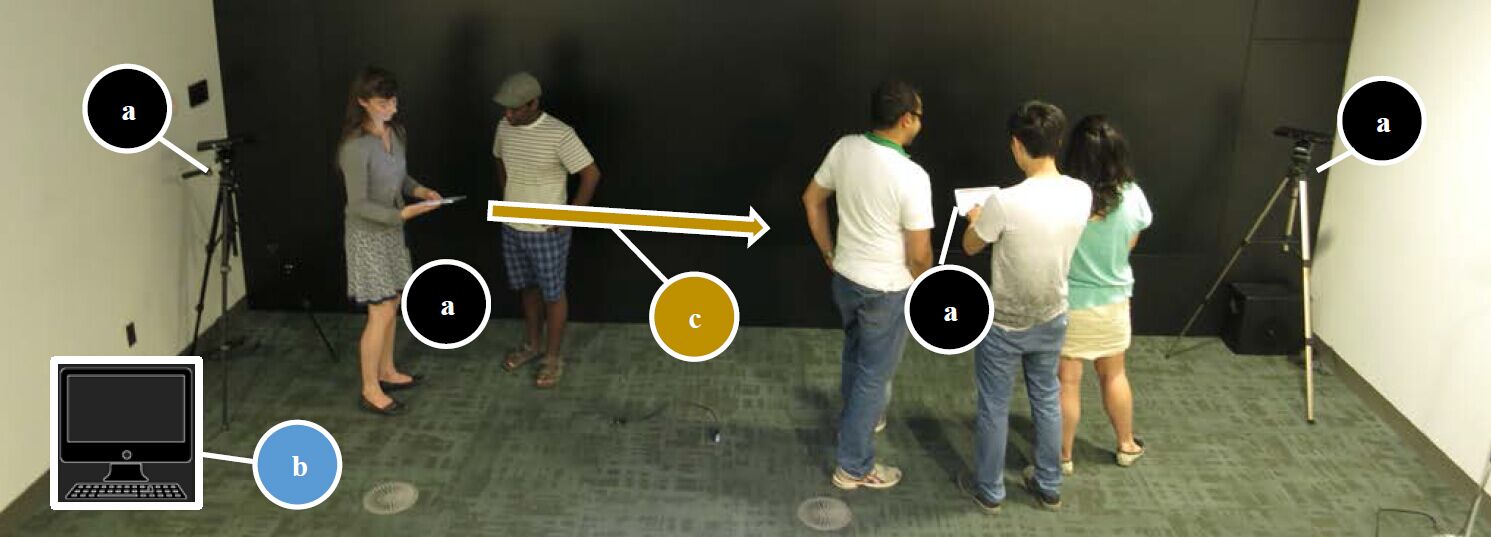

(a) Numerous devices and sensors that are supported by (b) software tools and components providing information that facilitates (c) spatial interactions between devices and the environment.

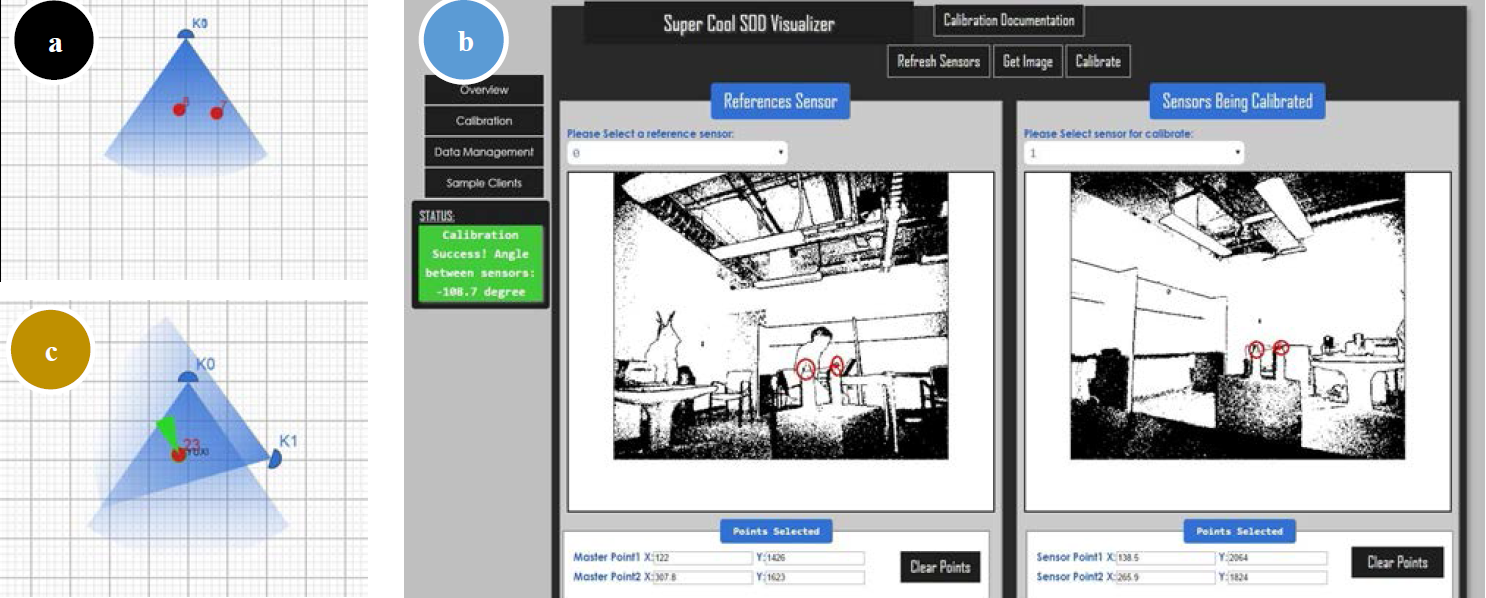

Sensor Fusion approach with multiple Kinect sensors

(a) The state of the environment with two uncalibrated, overlapping Kinect sensors, showing two skeletons being tracked in the environment. (b) The interface for calibrating multiple Kinect sensors. (c) A properly calibrated environment with overlapping Kinect areas, showing a single tracked user paired with a device, with orientation displayed.
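
The difference between panels (a) and (c) can be illustrated with a small sketch. This is not the toolkit's actual implementation: `SensorPose`, `to_room`, and `merge_detections` are hypothetical names, and the sketch assumes that calibration yields each sensor's 2D position and heading in a shared room coordinate frame. Once detections from both sensors are expressed in that frame, nearby detections can be merged so that one person appears as a single tracked user rather than two skeletons.

```python
import math
from dataclasses import dataclass


@dataclass
class SensorPose:
    """Hypothetical calibration result: sensor position and heading in room coordinates."""
    x: float
    y: float
    heading_rad: float


def to_room(pose, local_point):
    """Rotate, then translate, a sensor-local (x, y) point into room coordinates."""
    lx, ly = local_point
    cos_h, sin_h = math.cos(pose.heading_rad), math.sin(pose.heading_rad)
    return (pose.x + lx * cos_h - ly * sin_h,
            pose.y + lx * sin_h + ly * cos_h)


def merge_detections(points, max_distance=0.3):
    """Greedily group room-space points within max_distance (metres) of a
    group's centroid, then return one averaged point per group."""
    groups = []
    for p in points:
        for g in groups:
            cx = sum(q[0] for q in g) / len(g)
            cy = sum(q[1] for q in g) / len(g)
            if math.hypot(p[0] - cx, p[1] - cy) <= max_distance:
                g.append(p)
                break
        else:
            groups.append([p])
    return [(sum(q[0] for q in g) / len(g), sum(q[1] for q in g) / len(g))
            for g in groups]


# Two sensors facing each other across a 4 m space (assumed layout).
sensor_a = SensorPose(0.0, 0.0, 0.0)
sensor_b = SensorPose(4.0, 0.0, math.pi)

# The same person, reported in each sensor's own local coordinates.
seen_by_a = (2.0, 1.0)
seen_by_b = (2.0, -1.0)

people = merge_detections([to_room(sensor_a, seen_by_a),
                           to_room(sensor_b, seen_by_b)])
print(len(people), people)
```

Without the `to_room` step the two local readings differ by two metres and would be treated as two separate people, which corresponds to the uncalibrated state in panel (a). The distance threshold of 0.3 m is an arbitrary illustrative value.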